An Empirical Study on Anomaly Detection Using Density-based and Representative-based Clustering Algorithms

Keywords:

Outliers, Noise points, ANN, k-means−−, DBSCAN, DBSCAN .Abstract

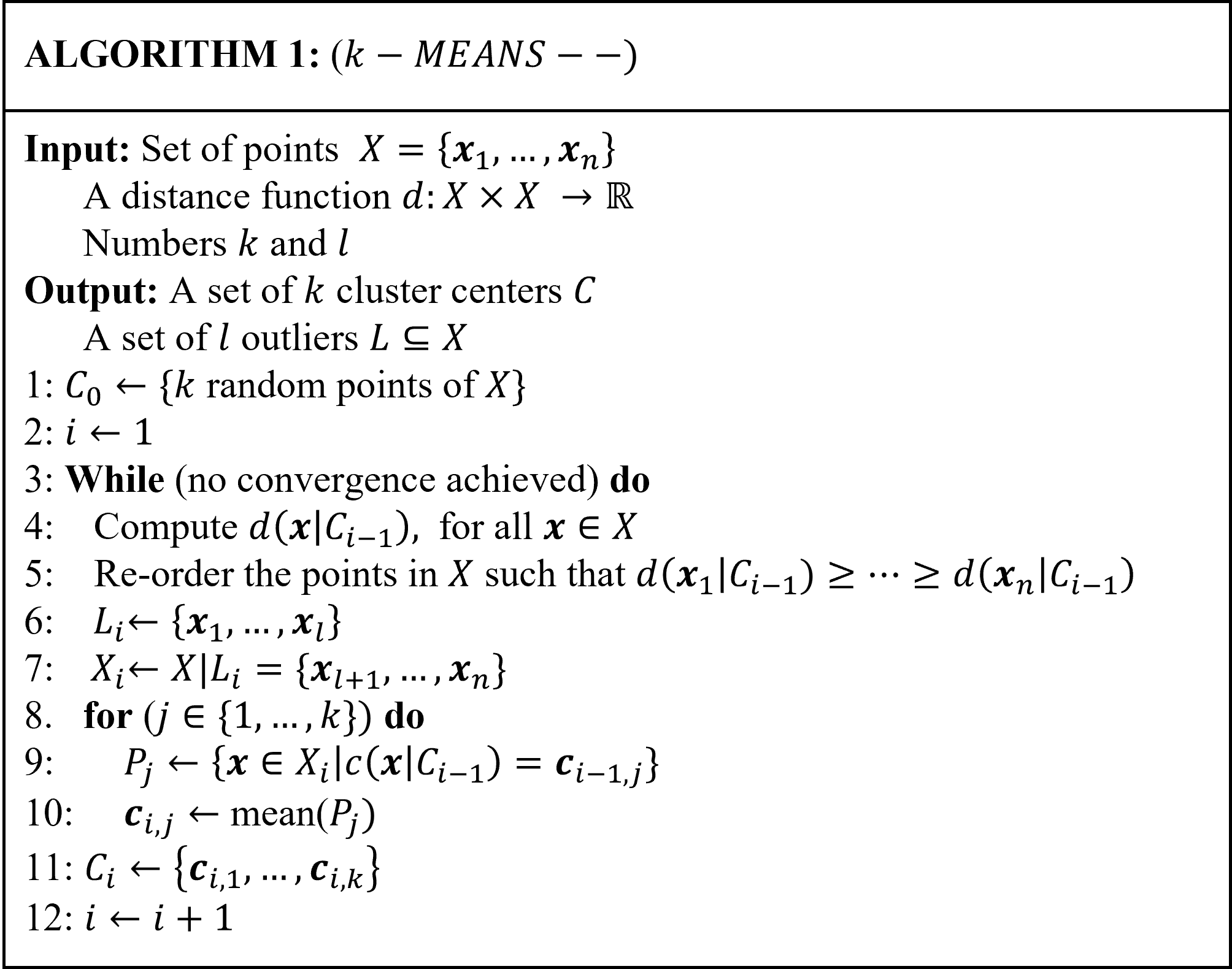

In data mining, and statistics, anomaly detection is the process of finding data patterns (outcomes, values, or observations) that deviate from the rest of the other observations or outcomes. Anomaly detection is heavily used in solving real-world problems in many application domains, like medicine, finance , cybersecurity, banking, networking, transportation, and military surveillance for enemy activities, but not limited to only these fields. In this paper, we present an empirical study on unsupervised anomaly detection techniques such as Density-Based Spatial Clustering of Applications with Noise (DBSCAN), (DBSCAN++) (with uniform initialization, k-center initialization, uniform with approximate neighbor initialization, and $k$-center with approximate neighbor initialization), and $k$-means$--$ algorithms on six benchmark imbalanced data sets. Findings from our in-depth empirical study show that k-means-- is more robust than DBSCAN, and DBSCAN++, in terms of the different evaluation measures (F1-score, False alarm rate, Adjusted rand index, and Jaccard coefficient), and running time. We also observe that DBSCAN performs very well on data sets with fewer number of data points. Moreover, the results indicate that the choice of clustering algorithm can significantly impact the performance of anomaly detection and that the performance of different algorithms varies depending on the characteristics of the data. Overall, this study provides insights into the strengths and limitations of different clustering algorithms for anomaly detection and can help guide the selection of appropriate algorithms for specific applications.

Published

How to Cite

Issue

Section

Copyright (c) 2023 Olumuyiwa James Peter, Gerard Shu Fuhnwi, Janet O. Agbaje, Kayode Oshinubi

This work is licensed under a Creative Commons Attribution 4.0 International License.

How to Cite

Similar Articles

- O. J. Ibidoja, F. P. Shan, Mukhtar, J. Sulaiman, M. K. M. Ali, Robust M-estimators and Machine Learning Algorithms for Improving the Predictive Accuracy of Seaweed Contaminated Big Data , Journal of the Nigerian Society of Physical Sciences: Volume 5, Issue 1, February 2023

- B. I. Akinnukawe, S. A. Okunuga, One-step block scheme with optimal hybrid points for numerical integration of second-order ordinary differential equations , Journal of the Nigerian Society of Physical Sciences: Volume 6, Issue 2, May 2024

- Mark I. Modebei, Olumide O. Olaiya, Ignatius P. Ngwongwo, Computational study of a 3-step hybrid integrators for third order ordinary differential equations with shift of three off-step points , Journal of the Nigerian Society of Physical Sciences: Volume 3, Issue 4, November 2021

- Catherine N. Ogbizi-Ugbe, Osowomuabe Njama-Abang, Samuel Oladimeji, Idongetsit E. Eteng, Edim A. Emanuel, Synergistic intelligence: a novel hybrid model for precision agriculture using k-means, naive Bayes, and knowledge graphs , Journal of the Nigerian Society of Physical Sciences: Volume 8, Issue 1, February 2026

- Segun L. Jegede, Adewale F. Lukman, Kayode Ayinde, Kehinde A. Odeniyi, Jackknife Kibria-Lukman M-Estimator: Simulation and Application , Journal of the Nigerian Society of Physical Sciences: Volume 4, Issue 2, May 2022

- A. B Yusuf, R. M Dima, S. K Aina, Optimized Breast Cancer Classification using Feature Selection and Outliers Detection , Journal of the Nigerian Society of Physical Sciences: Volume 3, Issue 4, November 2021

- Idris Babaji Muhammad, Salisu Usaini, Dynamics of Toxoplasmosis Disease in Cats population with vaccination , Journal of the Nigerian Society of Physical Sciences: Volume 3, Issue 1, February 2021

- Adefunke Bosede Familua, Ezekiel Olaoluwa Omole, Luke Azeta Ukpebor, A Higher-order Block Method for Numerical Approximation of Third-order Boundary Value Problems in ODEs , Journal of the Nigerian Society of Physical Sciences: Volume 4, Issue 3, August 2022

- Maduabuchi Gabriel Orakwelu, Olumuyiwa Otegbeye, Hermane Mambili-Mamboundou, A class of single-step hybrid block methods with equally spaced points for general third-order ordinary differential equations , Journal of the Nigerian Society of Physical Sciences: Volume 5, Issue 4, November 2023

- S. A. Abdullahi, A. G. Habib, N. Hussaini, Mathematical Model of In-host Dynamics of Snakebite Envenoming , Journal of the Nigerian Society of Physical Sciences: Volume 4, Issue 2, May 2022

You may also start an advanced similarity search for this article.