Feature-optimized hybrid CNN–ViT architecture for sustainable vision-based condition assessment in agriculture

Keywords:

Plant disease detection, Hybrid CNN–ViT, Multi-crop classification, Vision transformer, Feature engineering

Abstract

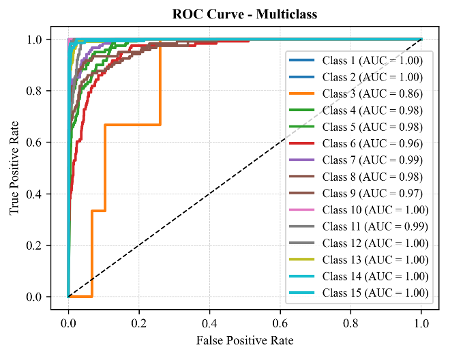

Early detection of structural and physiological changes in plants remains difficult for computer vision because of large intra-class variation and environmental noise. This paper combines three elements for multi-crop plant-disease classification: feature enhancement using the Excess Green (ExG) and Excess Red (ExR) vegetation indices, feature compression using principal component analysis (PCA), and an asymmetric convolutional neural network (CNN)–Vision Transformer (ViT) fusion architecture. Preprocessing consists of extracting ExG and ExR, applying statistical normalization, and compressing the features with PCA to improve discriminative ability and reduce redundant spectral information. The CNN component generates hierarchical texture encodings, while the ViT component produces self-attention encodings suited to capturing global associations. These complementary feature spaces are combined through a cross-domain fusion layer to improve representation capability. The proposed system achieves high classification accuracy (98%) and robustness across multiple crop datasets. Edge efficiency and explainability still need to be addressed before deployment in real-world agricultural scenarios; both are outlined as future work.
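The preprocessing steps named in the abstract (ExG and ExR extraction, statistical normalization, PCA compression) can be illustrated with a minimal sketch. It rests on several assumptions that the abstract does not confirm: the commonly used index definitions ExG = 2g - r - b and ExR = 1.4r - g on chromatic coordinates, z-score normalization, and a four-value per-image summary (mean and standard deviation of each index). The function names `vegetation_indices`, `index_features`, `zscore`, and `pca_compress` are hypothetical, and the random arrays stand in for real leaf images.

```python
import numpy as np

def vegetation_indices(rgb):
    """Return (ExG, ExR) maps for an H x W x 3 RGB image.

    Assumed definitions on chromatic coordinates r, g, b (r + g + b = 1):
    ExG = 2g - r - b and ExR = 1.4r - g.
    """
    rgb = rgb.astype(np.float64)
    total = rgb.sum(axis=-1, keepdims=True)
    total[total == 0] = 1.0  # avoid division by zero on black pixels
    r, g, b = np.moveaxis(rgb / total, -1, 0)
    return 2.0 * g - r - b, 1.4 * r - g

def index_features(image):
    """Summarize one image as [mean ExG, std ExG, mean ExR, std ExR] (assumed)."""
    exg, exr = vegetation_indices(image)
    return np.array([exg.mean(), exg.std(), exr.mean(), exr.std()])

def zscore(features):
    """Standardize each feature column to zero mean and unit variance."""
    std = features.std(axis=0)
    std[std == 0] = 1.0  # leave constant columns unscaled
    return (features - features.mean(axis=0)) / std

def pca_compress(features, n_components):
    """Project samples onto the top principal components via SVD."""
    centered = features - features.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T

# Placeholder data: 8 random 32 x 32 RGB images in place of a real dataset.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(8, 32, 32, 3))
features = np.stack([index_features(img) for img in images])
compressed = pca_compress(zscore(features), n_components=2)
print(compressed.shape)  # (8, 2)
```

In a pipeline like the one described, compressed features of this kind would sit alongside the CNN and ViT encodings; how the paper's cross-domain fusion layer combines them is not specified in the abstract and is not modeled here.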

Copyright (c) 2026 Muhammad Musa Liman, Rajesh Prasad, Hauwa Ahmad Amshi (Author)

This work is licensed under a Creative Commons Attribution 4.0 International License.
