Feature-optimized hybrid CNN–ViT architecture for sustainable vision-based condition assessment in agriculture

Keywords:

Plant disease detection, Hybrid CNN–ViT, Multi-crop classification, Vision transformer, Feature engineering

Abstract

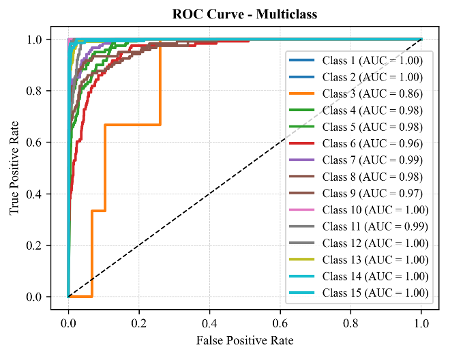

Detecting structural and physiological changes in plants at an early stage is a difficult computer-vision problem because of large intra-class variation and environmental noise. This paper combines feature enhancement based on vegetation indices (Excess Green, ExG, and Excess Red, ExR), feature compression with Principal Component Analysis (PCA), and an asymmetric CNN–ViT fusion architecture for plant disease classification on multi-crop inputs. Preprocessing extracts the ExG and ExR indices, applies statistical normalization, and then uses PCA to compress the features, which enhances discriminative ability and reduces redundant spectral information. The CNN branch produces a hierarchical texture encoding, while the ViT branch produces a self-attention encoding that is better suited to capturing global associations. A cross-domain fusion layer combines these complementary feature spaces to improve representation capability. The proposed system achieves 98% classification accuracy and is robust across multiple crop datasets. Edge efficiency and explainability still need to be addressed before the model can be deployed in real-world agricultural settings; both are outlined as future work.
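The preprocessing chain described in the abstract (vegetation indices, statistical normalization, PCA compression) can be illustrated with a minimal NumPy sketch. The abstract does not give the exact formulas or settings, so everything here is an assumption: the indices use the commonly cited definitions ExG = 2g - r - b and ExR = 1.4r - g on chromatic coordinates, the per-image summary statistics, the z-score normalization, the number of components, and the function names `vegetation_indices`, `zscore`, and `pca_compress` are all hypothetical choices made for illustration, and random arrays stand in for leaf images.

```python
import numpy as np

def vegetation_indices(rgb):
    """Return (ExG, ExR) maps for an H x W x 3 RGB image.

    Assumes the common definitions on chromatic coordinates:
    ExG = 2g - r - b and ExR = 1.4r - g.
    """
    rgb = rgb.astype(np.float64)
    total = rgb.sum(axis=-1, keepdims=True)
    total[total == 0] = 1.0  # avoid division by zero on black pixels
    r, g, b = np.moveaxis(rgb / total, -1, 0)
    exg = 2.0 * g - r - b
    exr = 1.4 * r - g
    return exg, exr

def zscore(features, eps=1e-8):
    """Standardize each feature column to zero mean and unit variance."""
    mean = features.mean(axis=0)
    std = features.std(axis=0)
    return (features - mean) / (std + eps)

def pca_compress(features, n_components):
    """Project features onto their top principal components via SVD."""
    centered = features - features.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T

# Hypothetical stand-in data: 16 random 32 x 32 RGB "images".
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(16, 32, 32, 3))

# Summarize each image by the mean and spread of its index maps
# (an illustrative feature choice, not the paper's).
rows = []
for img in images:
    exg, exr = vegetation_indices(img)
    rows.append([exg.mean(), exg.std(), exr.mean(), exr.std()])

features = zscore(np.array(rows))
compressed = pca_compress(features, n_components=2)
print(compressed.shape)  # (16, 2)
```

In a pipeline like the one the abstract outlines, compressed features of this kind would be one input to the CNN and ViT branches; how the paper actually feeds them into the network is not stated here.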

Copyright (c) 2026 Muhammad Musa Liman, Rajesh Prasad, Hauwa Ahmad Amshi (Author)

This work is licensed under a Creative Commons Attribution 4.0 International License.
